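This article shows how to fine-tune a RepVGG model with the Paddle 2.0 high-level API. We import the required packages, build the RepVGG block and the RepVGG model, wrap the preset model configurations, configure the model through paddle.Model, load the Cifar10 dataset, train, and then predict an image with model.predict_batch. The training data can also be visualized with VisualDL.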


# Import Paddle
import paddle
import paddle.nn as nn
from paddle.static import InputSpec
from paddle.vision.datasets import Cifar10
from paddle.vision.transforms import Resize, CenterCrop, Transpose, Normalize, Compose

# Import other packages
import cv2
import numpy as np
from IPython.display import Image, display

# Enable static graph mode
# (the model can also be trained directly in dynamic graph mode)
paddle.enable_static()

# Convolution + batch normalization
class ConvBN(nn.Layer):
    def __init__(self, in_channels, out_channels, kernel_size, stride, padding, groups=1):
        super(ConvBN, self).__init__()
        self.conv = nn.Conv2D(in_channels=in_channels, out_channels=out_channels,
                              kernel_size=kernel_size, stride=stride, padding=padding, groups=groups, bias_attr=False)
        self.bn = nn.BatchNorm2D(num_features=out_channels)

    def forward(self, x):
        y = self.conv(x)
        y = self.bn(y)
        return y


# RepVGG block
class RepVGGBlock(nn.Layer):

    def __init__(self, in_channels, out_channels, kernel_size,
                 stride=1, padding=0, dilation=1, groups=1, padding_mode='zeros'):
        super(RepVGGBlock, self).__init__()
        self.in_channels = in_channels
        self.out_channels = out_channels
        self.kernel_size = kernel_size
        self.stride = stride
        self.padding = padding
        self.dilation = dilation
        self.groups = groups
        self.padding_mode = padding_mode

        assert kernel_size == 3
        assert padding == 1

        padding_11 = padding - kernel_size // 2

        self.nonlinearity = nn.ReLU()

        # Identity branch (BN only), used when input and output shapes match
        self.rbr_identity = nn.BatchNorm2D(
            num_features=in_channels) if out_channels == in_channels and stride == 1 else None
        # 3x3 convolution branch
        self.rbr_dense = ConvBN(in_channels=in_channels, out_channels=out_channels,
                                kernel_size=kernel_size, stride=stride, padding=padding, groups=groups)
        # 1x1 convolution branch
        self.rbr_1x1 = ConvBN(in_channels=in_channels, out_channels=out_channels,
                              kernel_size=1, stride=stride, padding=padding_11, groups=groups)

    def forward(self, inputs):
        # In evaluation mode, use the single re-parameterized 3x3 convolution
        if not self.training:
            return self.nonlinearity(self.rbr_reparam(inputs))

        if self.rbr_identity is None:
            id_out = 0
        else:
            id_out = self.rbr_identity(inputs)

        return self.nonlinearity(self.rbr_dense(inputs) + self.rbr_1x1(inputs) + id_out)

    def eval(self):
        # Build the fused convolution on first use, then refresh its weights
        if not hasattr(self, 'rbr_reparam'):
            self.rbr_reparam = nn.Conv2D(in_channels=self.in_channels, out_channels=self.out_channels, kernel_size=self.kernel_size, stride=self.stride,
                                         padding=self.padding, dilation=self.dilation, groups=self.groups, padding_mode=self.padding_mode)
        self.training = False
        kernel, bias = self.get_equivalent_kernel_bias()
        self.rbr_reparam.weight.set_value(kernel)
        self.rbr_reparam.bias.set_value(bias)
        for layer in self.sublayers():
            layer.eval()

    def get_equivalent_kernel_bias(self):
        kernel3x3, bias3x3 = self._fuse_bn_tensor(self.rbr_dense)
        kernel1x1, bias1x1 = self._fuse_bn_tensor(self.rbr_1x1)
        kernelid, biasid = self._fuse_bn_tensor(self.rbr_identity)
        return kernel3x3 + self._pad_1x1_to_3x3_tensor(kernel1x1) + kernelid, bias3x3 + bias1x1 + biasid

    def _pad_1x1_to_3x3_tensor(self, kernel1x1):
        if kernel1x1 is None:
            return 0
        else:
            return nn.functional.pad(kernel1x1, [1, 1, 1, 1])

    def _fuse_bn_tensor(self, branch):
        if branch is None:
            return 0, 0
        if isinstance(branch, ConvBN):
            kernel = branch.conv.weight
            running_mean = branch.bn._mean
            running_var = branch.bn._variance
            gamma = branch.bn.weight
            beta = branch.bn.bias
            eps = branch.bn._epsilon
        else:
            assert isinstance(branch, nn.BatchNorm2D)
            # The identity branch is expressed as a 3x3 kernel with a 1 at the center
            if not hasattr(self, 'id_tensor'):
                input_dim = self.in_channels // self.groups
                kernel_value = np.zeros(
                    (self.in_channels, input_dim, 3, 3), dtype=np.float32)
                for i in range(self.in_channels):
                    kernel_value[i, i % input_dim, 1, 1] = 1
                self.id_tensor = paddle.to_tensor(kernel_value)
            kernel = self.id_tensor
            running_mean = branch._mean
            running_var = branch._variance
            gamma = branch.weight
            beta = branch.bias
            eps = branch._epsilon
        std = (running_var + eps).sqrt()
        t = (gamma / std).reshape((-1, 1, 1, 1))
        return kernel * t, beta - running_mean * gamma / std


# RepVGG model
class RepVGG(nn.Layer):

    def __init__(self, num_blocks, width_multiplier=None, override_groups_map=None, in_channels=3, class_dim=1000):
        super(RepVGG, self).__init__()

        assert len(width_multiplier) == 4

        self.override_groups_map = override_groups_map or dict()

        assert 0 not in self.override_groups_map

        self.in_planes = min(64, int(64 * width_multiplier[0]))

        self.stage0 = RepVGGBlock(
            in_channels=in_channels, out_channels=self.in_planes, kernel_size=3, stride=2, padding=1)
        self.cur_layer_idx = 1
        self.stage1 = self._make_stage(
            int(64 * width_multiplier[0]), num_blocks[0], stride=2)
        self.stage2 = self._make_stage(
            int(128 * width_multiplier[1]), num_blocks[1], stride=2)
        self.stage3 = self._make_stage(
            int(256 * width_multiplier[2]), num_blocks[2], stride=2)
        self.stage4 = self._make_stage(
            int(512 * width_multiplier[3]), num_blocks[3], stride=2)
        self.gap = nn.AdaptiveAvgPool2D(output_size=1)
        self.linear = nn.Linear(int(512 * width_multiplier[3]), class_dim)

    def _make_stage(self, planes, num_blocks, stride):
        # Only the first block of a stage downsamples; the rest use stride 1
        strides = [stride] + [1]*(num_blocks-1)
        blocks = []
        for stride in strides:
            cur_groups = self.override_groups_map.get(self.cur_layer_idx, 1)
            blocks.append(RepVGGBlock(in_channels=self.in_planes, out_channels=planes, kernel_size=3,
                                      stride=stride, padding=1, groups=cur_groups))
            self.in_planes = planes
            self.cur_layer_idx += 1
        return nn.Sequential(*blocks)

    def forward(self, x):
        out = self.stage0(x)
        out = self.stage1(out)
        out = self.stage2(out)
        out = self.stage3(out)
        out = self.stage4(out)
        out = self.gap(out)
        out = paddle.flatten(out, start_axis=1)
        out = self.linear(out)
        return out


# Model hyperparameter configuration
optional_groupwise_layers = [2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26]

g2_map = {l: 2 for l in optional_groupwise_layers}
g4_map = {l: 4 for l in optional_groupwise_layers}


# Preset model configurations
def RepVGG_A0(**kwargs):
    return RepVGG(num_blocks=[2, 4, 14, 1], width_multiplier=[0.75, 0.75, 0.75, 2.5], override_groups_map=None, **kwargs)


def RepVGG_A1(**kwargs):
    return RepVGG(num_blocks=[2, 4, 14, 1], width_multiplier=[1, 1, 1, 2.5], override_groups_map=None, **kwargs)


def RepVGG_A2(**kwargs):
    return RepVGG(num_blocks=[2, 4, 14, 1], width_multiplier=[1.5, 1.5, 1.5, 2.75], override_groups_map=None, **kwargs)


def RepVGG_B0(**kwargs):
    return RepVGG(num_blocks=[4, 6, 16, 1], width_multiplier=[1, 1, 1, 2.5], override_groups_map=None, **kwargs)


def RepVGG_B1(**kwargs):
    return RepVGG(num_blocks=[4, 6, 16, 1], width_multiplier=[2, 2, 2, 4], override_groups_map=None, **kwargs)


def RepVGG_B1g2(**kwargs):
    return RepVGG(num_blocks=[4, 6, 16, 1], width_multiplier=[2, 2, 2, 4], override_groups_map=g2_map, **kwargs)


def RepVGG_B1g4(**kwargs):
    return RepVGG(num_blocks=[4, 6, 16, 1], width_multiplier=[2, 2, 2, 4], override_groups_map=g4_map, **kwargs)


def RepVGG_B2(**kwargs):
    return RepVGG(num_blocks=[4, 6, 16, 1], width_multiplier=[2.5, 2.5, 2.5, 5], override_groups_map=None, **kwargs)


def RepVGG_B2g2(**kwargs):
    return RepVGG(num_blocks=[4, 6, 16, 1], width_multiplier=[2.5, 2.5, 2.5, 5], override_groups_map=g2_map, **kwargs)


def RepVGG_B2g4(**kwargs):
    return RepVGG(num_blocks=[4, 6, 16, 1], width_multiplier=[2.5, 2.5, 2.5, 5], override_groups_map=g4_map, **kwargs)


def RepVGG_B3(**kwargs):
    return RepVGG(num_blocks=[4, 6, 16, 1], width_multiplier=[3, 3, 3, 5], override_groups_map=None, **kwargs)


def RepVGG_B3g2(**kwargs):
    return RepVGG(num_blocks=[4, 6, 16, 1], width_multiplier=[3, 3, 3, 5], override_groups_map=g2_map, **kwargs)


def RepVGG_B3g4(**kwargs):
    return RepVGG(num_blocks=[4, 6, 16, 1], width_multiplier=[3, 3, 3, 5], override_groups_map=g4_map, **kwargs)


# Set the model inputs and labels
images = InputSpec(shape=[-1, 3, 32, 32], dtype='float32', name='images')
labels = InputSpec(shape=[-1], dtype='int64', name='labels')

# Initialize the model
model = paddle.Model(RepVGG_A0(in_channels=3, class_dim=10), inputs=images, labels=labels)

# Load the pretrained model parameters
# (skip_mismatch=True skips weights whose shapes differ, here the 1000-class linear layer)
model.load(path='data/data69662/RepVGG_A0', skip_mismatch=True, reset_optimizer=True)

# Print the model structure
model.summary()

# Configure the optimizer
opt = paddle.optimizer.Adam(learning_rate=0.001, parameters=model.parameters())

# Configure the model
model.prepare(optimizer=opt, loss=nn.CrossEntropyLoss(), metrics=paddle.metric.Accuracy(topk=(1, 5)))

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/hapi/model.py:1206: UserWarning: Skip loading for linear.weight. linear.weight receives a shape [1280, 1000], but the expected shape is [1280, 10].

("Skip loading for {}. ".format(key) + str(err)))

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/hapi/model.py:1206: UserWarning: Skip loading for linear.bias. linear.bias receives a shape [1000], but the expected shape is [10].

("Skip loading for {}. ".format(key) + str(err)))

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/hapi/model_summary.py:107: UserWarning: Your model was created in static mode, this may not get correct summary information!

"Your model was created in static mode, this may not get correct summary information!"

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/nn/layer/norm.py:636: UserWarning: When training, we now always track global mean and variance.

"When training, we now always track global mean and variance.")

-------------------------------------------------------------------------------

Layer (type) Input Shape Output Shape Param #

===============================================================================

Conv2D-1 [[1, 3, 32, 32]] [1, 48, 16, 16] 1,296

BatchNorm2D-1 [[1, 48, 16, 16]] [1, 48, 16, 16] 192

ConvBN-1 [[1, 3, 32, 32]] [1, 48, 16, 16] 1,488

Conv2D-2 [[1, 3, 32, 32]] [1, 48, 16, 16] 144

BatchNorm2D-2 [[1, 48, 16, 16]] [1, 48, 16, 16] 192

ConvBN-2 [[1, 3, 32, 32]] [1, 48, 16, 16] 336

ReLU-1 [[1, 48, 16, 16]] [1, 48, 16, 16] 0

RepVGGBlock-1 [[1, 3, 32, 32]] [1, 48, 16, 16] 1,824

Conv2D-3 [[1, 48, 16, 16]] [1, 48, 8, 8] 20,736

BatchNorm2D-3 [[1, 48, 8, 8]] [1, 48, 8, 8] 192

ConvBN-3 [[1, 48, 16, 16]] [1, 48, 8, 8] 20,928

Conv2D-4 [[1, 48, 16, 16]] [1, 48, 8, 8] 2,304

BatchNorm2D-4 [[1, 48, 8, 8]] [1, 48, 8, 8] 192

ConvBN-4 [[1, 48, 16, 16]] [1, 48, 8, 8] 2,496

ReLU-2 [[1, 48, 8, 8]] [1, 48, 8, 8] 0

RepVGGBlock-2 [[1, 48, 16, 16]] [1, 48, 8, 8] 23,424

BatchNorm2D-5 [[1, 48, 8, 8]] [1, 48, 8, 8] 192

Conv2D-5 [[1, 48, 8, 8]] [1, 48, 8, 8] 20,736

BatchNorm2D-6 [[1, 48, 8, 8]] [1, 48, 8, 8] 192

ConvBN-5 [[1, 48, 8, 8]] [1, 48, 8, 8] 20,928

Conv2D-6 [[1, 48, 8, 8]] [1, 48, 8, 8] 2,304

BatchNorm2D-7 [[1, 48, 8, 8]] [1, 48, 8, 8] 192

ConvBN-6 [[1, 48, 8, 8]] [1, 48, 8, 8] 2,496

ReLU-3 [[1, 48, 8, 8]] [1, 48, 8, 8] 0

RepVGGBlock-3 [[1, 48, 8, 8]] [1, 48, 8, 8] 23,616

Conv2D-7 [[1, 48, 8, 8]] [1, 96, 4, 4] 41,472

BatchNorm2D-8 [[1, 96, 4, 4]] [1, 96, 4, 4] 384

ConvBN-7 [[1, 48, 8, 8]] [1, 96, 4, 4] 41,856

Conv2D-8 [[1, 48, 8, 8]] [1, 96, 4, 4] 4,608

BatchNorm2D-9 [[1, 96, 4, 4]] [1, 96, 4, 4] 384

ConvBN-8 [[1, 48, 8, 8]] [1, 96, 4, 4] 4,992

ReLU-4 [[1, 96, 4, 4]] [1, 96, 4, 4] 0

RepVGGBlock-4 [[1, 48, 8, 8]] [1, 96, 4, 4] 46,848

BatchNorm2D-10 [[1, 96, 4, 4]] [1, 96, 4, 4] 384

Conv2D-9 [[1, 96, 4, 4]] [1, 96, 4, 4] 82,944

BatchNorm2D-11 [[1, 96, 4, 4]] [1, 96, 4, 4] 384

ConvBN-9 [[1, 96, 4, 4]] [1, 96, 4, 4] 83,328

Conv2D-10 [[1, 96, 4, 4]] [1, 96, 4, 4] 9,216

BatchNorm2D-12 [[1, 96, 4, 4]] [1, 96, 4, 4] 384

ConvBN-10 [[1, 96, 4, 4]] [1, 96, 4, 4] 9,600

ReLU-5 [[1, 96, 4, 4]] [1, 96, 4, 4] 0

RepVGGBlock-5 [[1, 96, 4, 4]] [1, 96, 4, 4] 93,312

BatchNorm2D-13 [[1, 96, 4, 4]] [1, 96, 4, 4] 384

Conv2D-11 [[1, 96, 4, 4]] [1, 96, 4, 4] 82,944

BatchNorm2D-14 [[1, 96, 4, 4]] [1, 96, 4, 4] 384

ConvBN-11 [[1, 96, 4, 4]] [1, 96, 4, 4] 83,328

Conv2D-12 [[1, 96, 4, 4]] [1, 96, 4, 4] 9,216

BatchNorm2D-15 [[1, 96, 4, 4]] [1, 96, 4, 4] 384

ConvBN-12 [[1, 96, 4, 4]] [1, 96, 4, 4] 9,600

ReLU-6 [[1, 96, 4, 4]] [1, 96, 4, 4] 0

RepVGGBlock-6 [[1, 96, 4, 4]] [1, 96, 4, 4] 93,312

BatchNorm2D-16 [[1, 96, 4, 4]] [1, 96, 4, 4] 384

Conv2D-13 [[1, 96, 4, 4]] [1, 96, 4, 4] 82,944

BatchNorm2D-17 [[1, 96, 4, 4]] [1, 96, 4, 4] 384

ConvBN-13 [[1, 96, 4, 4]] [1, 96, 4, 4] 83,328

Conv2D-14 [[1, 96, 4, 4]] [1, 96, 4, 4] 9,216

BatchNorm2D-18 [[1, 96, 4, 4]] [1, 96, 4, 4] 384

ConvBN-14 [[1, 96, 4, 4]] [1, 96, 4, 4] 9,600

ReLU-7 [[1, 96, 4, 4]] [1, 96, 4, 4] 0

RepVGGBlock-7 [[1, 96, 4, 4]] [1, 96, 4, 4] 93,312

Conv2D-15 [[1, 96, 4, 4]] [1, 192, 2, 2] 165,888

BatchNorm2D-19 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-15 [[1, 96, 4, 4]] [1, 192, 2, 2] 166,656

Conv2D-16 [[1, 96, 4, 4]] [1, 192, 2, 2] 18,432

BatchNorm2D-20 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-16 [[1, 96, 4, 4]] [1, 192, 2, 2] 19,200

ReLU-8 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-8 [[1, 96, 4, 4]] [1, 192, 2, 2] 185,856

BatchNorm2D-21 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-17 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-22 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-17 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-18 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-23 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-18 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-9 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-9 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-24 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-19 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-25 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-19 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-20 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-26 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-20 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-10 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-10 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-27 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-21 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-28 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-21 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-22 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-29 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-22 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-11 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-11 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-30 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-23 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-31 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-23 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-24 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-32 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-24 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-12 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-12 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-33 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-25 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-34 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-25 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-26 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-35 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-26 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-13 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-13 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-36 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-27 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-37 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-27 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-28 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-38 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-28 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-14 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-14 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-39 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-29 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-40 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-29 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-30 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-41 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-30 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-15 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-15 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-42 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-31 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-43 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-31 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-32 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-44 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-32 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-16 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-16 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-45 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-33 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-46 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-33 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-34 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-47 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-34 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-17 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-17 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-48 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-35 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-49 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-35 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-36 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-50 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-36 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-18 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-18 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-51 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-37 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-52 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-37 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-38 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-53 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-38 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-19 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-19 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-54 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-39 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-55 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-39 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-40 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-56 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-40 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-20 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-20 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

BatchNorm2D-57 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

Conv2D-41 [[1, 192, 2, 2]] [1, 192, 2, 2] 331,776

BatchNorm2D-58 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-41 [[1, 192, 2, 2]] [1, 192, 2, 2] 332,544

Conv2D-42 [[1, 192, 2, 2]] [1, 192, 2, 2] 36,864

BatchNorm2D-59 [[1, 192, 2, 2]] [1, 192, 2, 2] 768

ConvBN-42 [[1, 192, 2, 2]] [1, 192, 2, 2] 37,632

ReLU-21 [[1, 192, 2, 2]] [1, 192, 2, 2] 0

RepVGGBlock-21 [[1, 192, 2, 2]] [1, 192, 2, 2] 370,944

Conv2D-43 [[1, 192, 2, 2]] [1, 1280, 1, 1] 2,211,840

BatchNorm2D-60 [[1, 1280, 1, 1]] [1, 1280, 1, 1] 5,120

ConvBN-43 [[1, 192, 2, 2]] [1, 1280, 1, 1] 2,216,960

Conv2D-44 [[1, 192, 2, 2]] [1, 1280, 1, 1] 245,760

BatchNorm2D-61 [[1, 1280, 1, 1]] [1, 1280, 1, 1] 5,120

ConvBN-44 [[1, 192, 2, 2]] [1, 1280, 1, 1] 250,880

ReLU-22 [[1, 1280, 1, 1]] [1, 1280, 1, 1] 0

RepVGGBlock-22 [[1, 192, 2, 2]] [1, 1280, 1, 1] 2,467,840

AdaptiveAvgPool2D-1 [[1, 1280, 1, 1]] [1, 1280, 1, 1] 0

Linear-1 [[1, 1280]] [1, 10] 12,810

===============================================================================

Total params: 23,556,330

Trainable params: 23,509,034

Non-trainable params: 47,296

-------------------------------------------------------------------------------

Input size (MB): 0.01

Forward/backward pass size (MB): 2.38

Params size (MB): 89.86

Estimated Total Size (MB): 92.25

-------------------------------------------------------------------------------

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/fluid/layers/math_op_patch.py:298: UserWarning: <ipython-input-2-bd09d46e5add>:53 The behavior of expression A + B has been unified with elementwise_add(X, Y, axis=-1) from Paddle 2.0. If your code works well in the older versions but crashes in this version, try to use elementwise_add(X, Y, axis=0) instead of A + B. This transitional warning will be dropped in the future.

op_type, op_type, EXPRESSION_MAP[method_name]))

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/fluid/layers/math_op_patch.py:298: UserWarning: /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/metric/metrics.py:270 The behavior of expression A == B has been unified with equal(X, Y, axis=-1) from Paddle 2.0. If your code works well in the older versions but crashes in this version, try to use equal(X, Y, axis=0) instead of A == B. This transitional warning will be dropped in the future.

op_type, op_type, EXPRESSION_MAP[method_name]))
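The re-parameterization performed by RepVGGBlock (folding each branch's BatchNorm into its convolution, padding the 1x1 kernel to 3x3, and expressing the identity branch as a 3x3 kernel with a 1 at the center) can be checked numerically. The following is a minimal pure-Python sketch for the single-channel case, not the article's Paddle implementation. The helper names (`conv3x3`, `batch_norm`, `fuse`, `pad_1x1`) and all numeric values are hypothetical and chosen only for illustration. The ReLU is left out because it is applied identically to both forms.

```python
import math


def conv3x3(img, kernel, bias=0.0):
    """Single-channel 3x3 cross-correlation, stride 1, zero padding 1."""
    h, w = len(img), len(img[0])
    out = [[bias] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            for di in range(3):
                for dj in range(3):
                    y, x = i + di - 1, j + dj - 1
                    if 0 <= y < h and 0 <= x < w:
                        out[i][j] += kernel[di][dj] * img[y][x]
    return out


def batch_norm(img, gamma, beta, mean, var, eps=1e-5):
    """Inference-mode batch normalization using running statistics."""
    std = math.sqrt(var + eps)
    return [[gamma * (v - mean) / std + beta for v in row] for row in img]


def fuse(kernel, gamma, beta, mean, var, eps=1e-5):
    """Fold BN into the preceding bias-free conv: (kernel * t, beta - mean * t)."""
    std = math.sqrt(var + eps)
    t = gamma / std
    return [[k * t for k in row] for row in kernel], beta - mean * t


def pad_1x1(value):
    """Place a 1x1 kernel value at the center of a zero 3x3 kernel."""
    return [[0.0, 0.0, 0.0], [0.0, value, 0.0], [0.0, 0.0, 0.0]]


# Hypothetical input, kernels and BN statistics (illustration only)
img = [[1.0, 2.0, 0.5, -1.0], [0.0, 3.0, 1.5, 2.0],
       [-2.0, 1.0, 0.0, 4.0], [2.5, -0.5, 1.0, 1.0]]
k3 = [[0.1, -0.2, 0.3], [0.0, 0.5, -0.4], [0.2, 0.1, -0.1]]
k1 = 0.7
bn3 = dict(gamma=1.2, beta=0.1, mean=0.3, var=0.8)
bn1 = dict(gamma=0.9, beta=-0.2, mean=-0.1, var=1.5)
bnid = dict(gamma=1.1, beta=0.05, mean=0.2, var=0.6)

# Training-time form: 3x3 branch + 1x1 branch + identity branch
out3 = batch_norm(conv3x3(img, k3), **bn3)
out1 = batch_norm([[k1 * v for v in row] for row in img], **bn1)
outid = batch_norm(img, **bnid)
three_branch = [[a + b + c for a, b, c in zip(r3, r1, rid)]
                for r3, r1, rid in zip(out3, out1, outid)]

# Inference-time form: one 3x3 convolution with a merged kernel and bias
fk3, fb3 = fuse(k3, **bn3)
fk1, fb1 = fuse([[k1]], **bn1)
fkid, fbid = fuse(pad_1x1(1.0), **bnid)
fk1 = pad_1x1(fk1[0][0])
kernel = [[a + b + c for a, b, c in zip(r3, r1, rid)]
          for r3, r1, rid in zip(fk3, fk1, fkid)]
bias = fb3 + fb1 + fbid
single_conv = conv3x3(img, kernel, bias)

max_diff = max(abs(a - b)
               for ra, rb in zip(three_branch, single_conv)
               for a, b in zip(ra, rb))
print('max difference between the two forms:', max_diff)
```

The two forms agree up to floating-point rounding, which is why the block can switch to a single convolution in evaluation mode without changing its output.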

# Configure data preprocessing
# Channel transpose + normalization
transform = Compose([Transpose(), Normalize(mean=127.5, std=127.5)])

# Load the datasets
train_dataset = Cifar10(mode='train', transform=transform)
val_dataset = Cifar10(mode='test', transform=transform)

Cache file /home/aistudio/.cache/paddle/dataset/cifar/cifar-10-python.tar.gz not found, downloading https://dataset.bj.bcebos.com/cifar/cifar-10-python.tar.gz

Begin to download

Download finished
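To make the effect of the preprocessing concrete, here is a small pure-Python sketch of what the two transforms compute, assuming Transpose uses its default HWC to CHW order and Normalize applies (x - mean) / std to every value. The helpers `hwc_to_chw` and `normalize` and the tiny 1x2 image are hypothetical, written only for illustration; they are not Paddle functions.

```python
def hwc_to_chw(img):
    """Reorder a nested-list image from height x width x channel to channel x height x width."""
    h, w, c = len(img), len(img[0]), len(img[0][0])
    return [[[img[y][x][ch] for x in range(w)] for y in range(h)] for ch in range(c)]


def normalize(value, mean=127.5, std=127.5):
    """Map a raw pixel value in [0, 255] to the range [-1, 1]."""
    return (value - mean) / std


# A hypothetical image with 1 row, 2 pixels and 3 channels
img = [[[0, 127.5, 255], [255, 0, 51]]]

chw = hwc_to_chw(img)
out = [[[normalize(v) for v in row] for row in plane] for plane in chw]
print(out)
```

With mean and std both set to 127.5, a pixel value of 0 maps to -1, 127.5 maps to 0, and 255 maps to 1, so the network sees inputs centered around zero.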

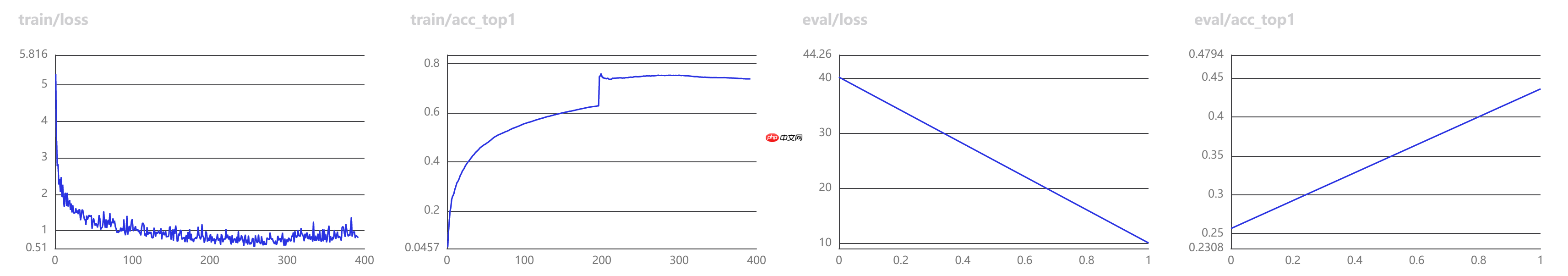

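# Configure the VisualDL visualization callback
# Once training starts, the training data can be viewed in the visualization panel on the right
vdl_callback = paddle.callbacks.VisualDL(log_dir='log')

# Model training
# train_data   training data
# eval_data    evaluation data
# batch_size   batch size
# epochs       number of training epochs
# eval_freq    evaluation interval
# log_freq     logging interval
# save_dir     checkpoint directory
# save_freq    checkpoint interval
# verbose      logging mode
# drop_last    whether to drop the last incomplete batch
# shuffle      whether to shuffle the training data
# num_workers  number of data-loading workers
# callbacks    callback functions
model.fit(
    train_data=train_dataset,
    eval_data=val_dataset,
    batch_size=256,
    epochs=2,
    eval_freq=1,
    log_freq=20,
    save_dir='save_models',
    save_freq=1,
    verbose=1,
    drop_last=False,
    shuffle=True,
    num_workers=8,
    callbacks=vdl_callback
)

The loss value printed in the log is the current step, and the metric is the average value of previous step.

Epoch 1/2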

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/fluid/layers/utils.py:77: DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working

return (isinstance(seq, collections.Sequence) and

step 196/196 [==============================] - loss: 1.0396 - acc_top1: 0.6414 - acc_top5: 0.9535 - 51ms/step

save checkpoint at /home/aistudio/save_models/0

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 40/40 [==============================] - loss: 2.1800 - acc_top1: 0.4489 - acc_top5: 0.9243 - 34ms/step

Eval samples: 10000

Epoch 2/2

step 196/196 [==============================] - loss: 0.5899 - acc_top1: 0.7437 - acc_top5: 0.9820 - 48ms/step

save checkpoint at /home/aistudio/save_models/1

Eval begin...

The loss value printed in the log is the current batch, and the metric is the average value of previous step.

step 40/40 [==============================] - loss: 2.4723 - acc_top1: 0.4512 - acc_top5: 0.9275 - 37ms/step

Eval samples: 10000

save checkpoint at /home/aistudio/save_models/final

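# Label list
classes = ['airplane', 'automobile', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck']

# Path of the image to predict
test_img_path = 'cat.jpg'

# Display the image to predict
display(Image(test_img_path))

# Read the test image
test_img = cv2.imread(test_img_path)

# Data preprocessing
# Resize + channel transpose + normalization + add a batch dimension
test_img = cv2.resize(test_img, (32, 32))
test_img = transform(test_img)
test_img = test_img[np.newaxis, ...]

# Model prediction
result = model.predict_batch(test_img)

# Post-process the result
# Take the index of the highest-confidence label + convert it to a label name
index = np.argmax(result)
predict_label = classes[index]

# Print the result
print('The predicted result for this image is: %s' % predict_label)

<IPython.core.display.Image object>

The predicted result for this image is: cat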
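The post-processing step above takes the argmax over the raw network outputs. Since the model ends in a linear layer, those outputs are presumably unnormalized scores; if a confidence value is also wanted, a softmax can be applied first. The following pure-Python sketch shows the idea. The `softmax` helper and the score values are hypothetical and are not the actual output of the trained model.

```python
import math


def softmax(scores):
    """Convert raw scores to probabilities that sum to 1 (numerically stable form)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


classes = ['airplane', 'automobile', 'bird', 'cat', 'deer',
           'dog', 'frog', 'horse', 'ship', 'truck']

# Hypothetical raw scores for one image (illustration only)
scores = [0.2, -1.0, 0.5, 3.1, 0.0, 1.7, -0.3, 0.4, -2.0, 0.1]

probs = softmax(scores)
# Index of the most probable class; softmax does not change the argmax
index = max(range(len(probs)), key=probs.__getitem__)
print('%s (confidence %.3f)' % (classes[index], probs[index]))
```

Softmax is monotonic, so the predicted label is the same with or without it; it only turns the winning score into a probability that is easier to interpret.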

